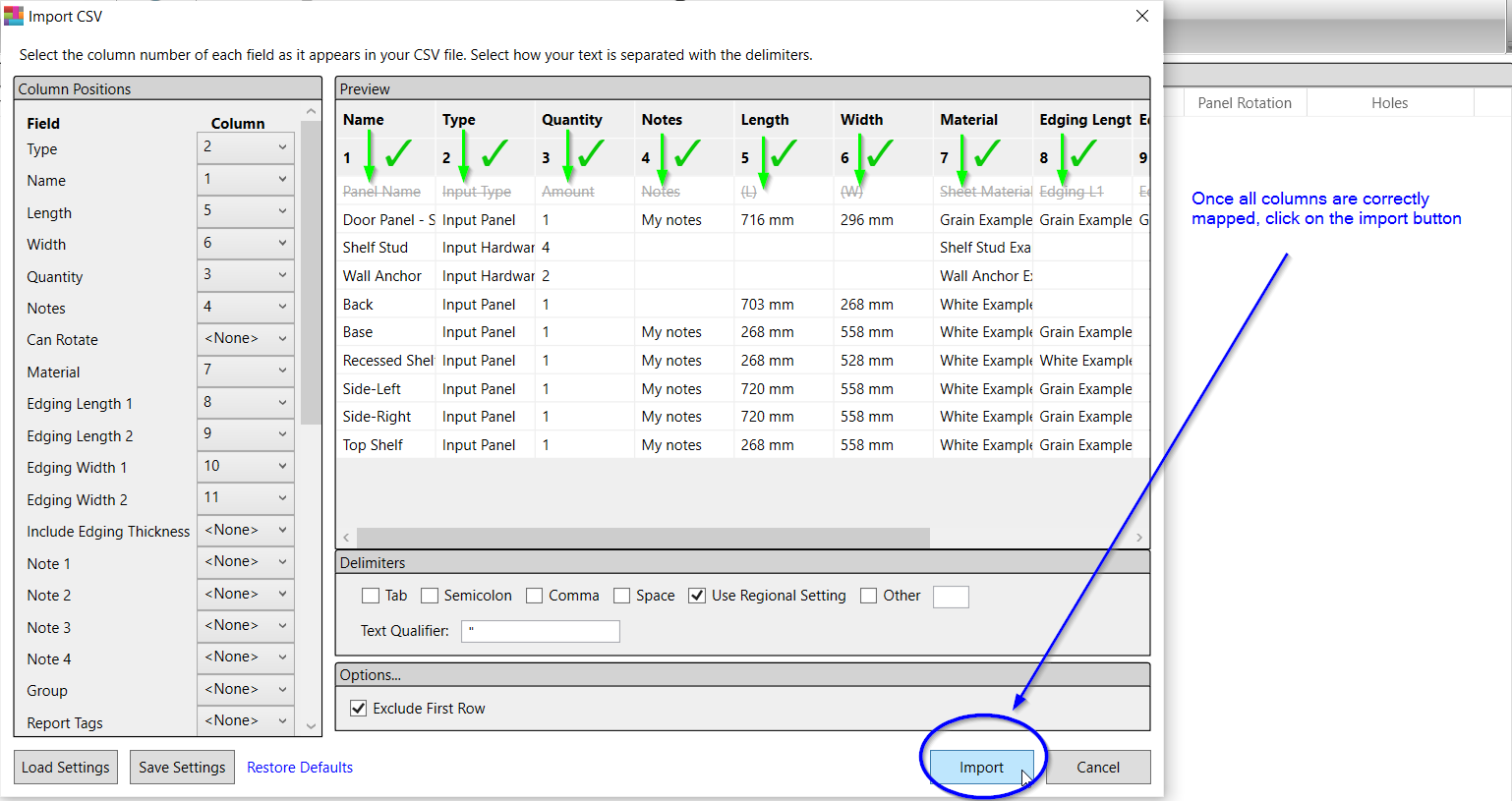

To import data from CSV files that don't meet these rules, map the data fields in the CSV file to the Salesforce data fields, and review Mapping Data Fields. Click OK to use your mapping for the current operation. Click Yes to confirm this action, or click No to choose another file.

But the issue is that the CSV columns are not fixed. For example, abc.csv has columns a, b, D and tableA has columns A, B, D. Now, I want to insert the CSV file's data into a database, mapping each column. I also have a database table which has the same header as the name table.

It'd be very helpful to learn your CREATE SYNTAX plus some dummy data. You wrote: "But instead, the IMPORT METHOD drop down greys out." Really the entire drop-down GUI element, or only the UPDATE menu item? `INSERT INTO _11 (col_0, col_1, q, qq, md5_feld, bool, id_txt)` Could you please try my test case to see if it works for you? `/*!40000 ALTER TABLE _11 DISABLE KEYS */` I've just tried to reproduce your issue but without success.

Before importing the CSV file, create all fields in the Database > Edit fields section. The first line of the CSV file must contain field names. Fields must be comma-separated and lowercase ('title', not 'Title'), and text fields that contain a comma must be surrounded with double quotes ("...").

The records are wrong because the data is split into 2 or more lines, but all the lines will have the same number of commas as the heading. This causes wrong data to enter the columns. So if I can map the columns while uploading the CSV, and if it can show me the lines which have errors, it could.

Without it, my life would be a lot more challenging! Thanks guys.

Each element in the mapping list defines the mapping for a specific column.
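The column-mapping question above (header `a,b,D` against table columns `A,B,D`, plus a report of the lines that have errors) can be sketched in plain Python. This is only an illustration under stated assumptions: it uses the standard `csv` and `sqlite3` modules rather than any of the tools mentioned above, the helper name `import_csv` is hypothetical, and the table and sample rows are made-up dummy data.

```python
import csv
import io
import sqlite3

def import_csv(conn, table, csv_text):
    """Insert CSV rows into `table`, matching header names to columns case-insensitively.

    Hypothetical helper. Returns (inserted_count, errors), where errors is a
    list of (line_number, message) for rows whose field count is wrong.
    """
    # Real column names of the table, keyed by their lowercase form.
    table_cols = {row[1].lower(): row[1]
                  for row in conn.execute(f'PRAGMA table_info("{table}")')}
    reader = csv.reader(io.StringIO(csv_text))
    header = next(reader)
    # Each mapping element pairs a CSV position with a table column.
    mapping = [(i, table_cols[name.strip().lower()])
               for i, name in enumerate(header)
               if name.strip().lower() in table_cols]
    cols = ", ".join(f'"{col}"' for _, col in mapping)
    marks = ", ".join("?" for _ in mapping)
    sql = f'INSERT INTO "{table}" ({cols}) VALUES ({marks})'
    inserted, errors = 0, []
    for row in reader:
        if len(row) != len(header):
            # Report the bad line instead of loading shifted data.
            errors.append((reader.line_num,
                           f"expected {len(header)} fields, got {len(row)}"))
            continue
        conn.execute(sql, [row[i] for i, _ in mapping])
        inserted += 1
    return inserted, errors

# Dummy data: the second data row is missing a field.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE tableA (A TEXT, B TEXT, D TEXT)")
sample = 'a,b,D\n1,2,3\n4,5\n"x, y",7,8\n'
inserted, errors = import_csv(conn, "tableA", sample)
```

Rows with the wrong number of fields are skipped and reported with their line number, so they can be fixed in the file before a second import.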

For more information, see supported data formats. Kick-ass application overall, can't wait to see how it progresses.

BULK INSERT is fast, and that's specifically because it doesn't support filtering, converting, or other manipulations during insert.

Use CSV mapping to map incoming data to columns inside tables when your ingestion source file is any of the following delimiter-separated tabular formats: CSV, TSV, PSV, SCSV, SOHsv, TXT and RAW. Please provide any additional information below.
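The idea of a mapping list, where each element describes one target column, can be illustrated with a small Python sketch. It is not the mapping syntax of any particular ingestion service: the keys `column` and `ordinal`, the `DELIMITERS` table, and the helper `apply_mapping` are all assumptions made for this example.

```python
import csv
import io

# Assumed delimiter per format name, for illustration only.
DELIMITERS = {"csv": ",", "tsv": "\t", "psv": "|", "scsv": ";", "sohsv": "\x01"}

def apply_mapping(text, fmt, mapping, skip_header=True):
    """Return one dict per data row, built from a list of column mappings.

    Each mapping element names a target column and the zero-based position
    (ordinal) of the source field that feeds it.
    """
    reader = csv.reader(io.StringIO(text), delimiter=DELIMITERS[fmt])
    if skip_header:
        next(reader, None)
    return [{m["column"]: row[m["ordinal"]] for m in mapping} for row in reader]

# The source file has three pipe-separated fields; only two are mapped,
# and in a different order than they appear in the file.
mapping = [
    {"column": "title", "ordinal": 1},
    {"column": "id", "ordinal": 0},
]
records = apply_mapping("id|title|extra\n7|Dune|x\n8|Emma|y\n", "psv", mapping)
```

Mapping by position rather than by header name means the same list works for files that have no header line at all (pass `skip_header=False` in that case).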

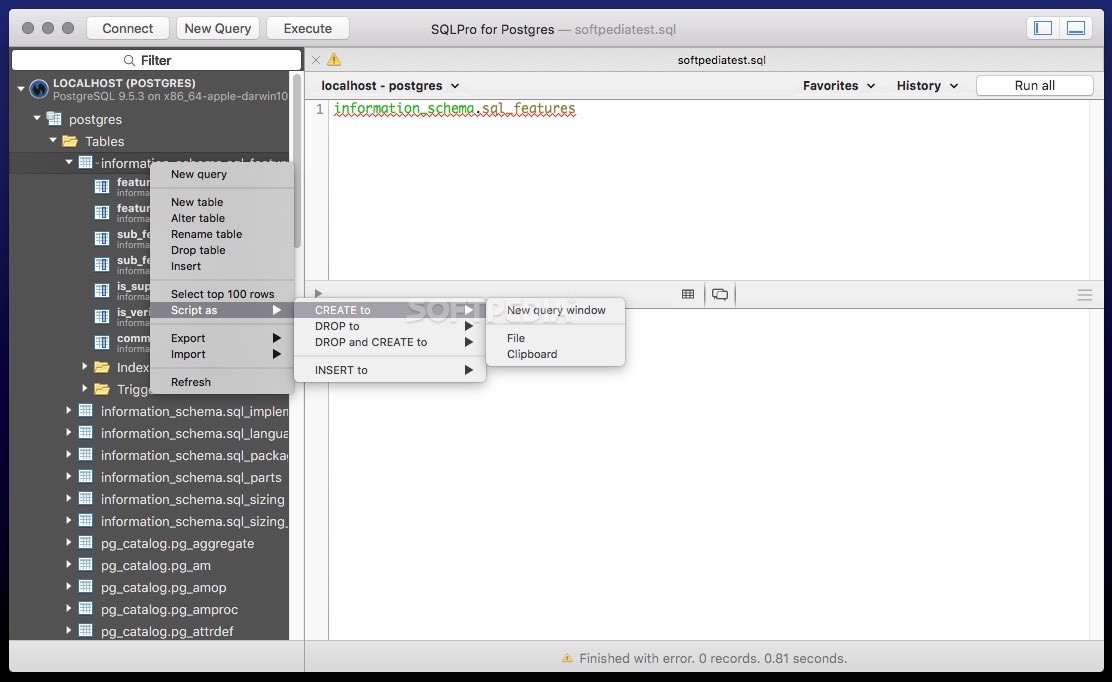

What version of Sequel Pro are you using? What version of MySQL are you connecting to on the server? 0.9.8.1 build 2492, connecting to MySQL 5.1.37.

What is the expected output? What do you see instead? I would expect to be able to run an update statement via CSV import when my primary key is mapped to a suitable field. I would hope to be able to run SQL queries equivalent to: `UPDATE table SET X=Y (etc) WHERE primary_key_field = 'primary_key_value_for_this_record'`. But instead, the IMPORT METHOD drop down greys out. If I can't do this, what is the UPDATE value showing in the INPUT METHOD select input for on the field mapping screen?

1. Create existing data in table, including non-integer primary key

Reporter: Date: 16:38:55 Status: WontFix Closed:

At present, I get an email with a 50k+ row CSV daily, and want to be able to transfer that into the database without going the Sequel Pro route (IE to be.

You can define mappings in extension mapping documents in a comma-separated value (CSV) file, which you can then import into the catalog.

Importing data from CSV to the modeling tool table. Text, Booleans, Numbers, Excel data with the applied style (converted to HTML on the modeling tool). Data Mapping. During data import, elements in the model are searched by the following criteria: stereotype tag value. It must be of a String type and have the Is ID property value set to true.

My understanding is you have many CSV files with different column layouts and you need to load them into appropriate tables in the database.

Approach 1: If you use any RDBMS, you should have some kind of import option. Explore that route to create tables based on the CSV files. Now, use Informatica to read the CSV, import all the tables, and load into tables.

Approach 2: Open the CSV file and write formulae using the header to generate a CREATE TABLE statement. This is a manual task.

Approach 3: Using Informatica. You need to do a lot of coding to create a dynamic mapping on the fly.

1. Read the CSV file and pass the header information to a Java transformation. The Java transformation should normalize and split the header column into rows. Now you have all the columns in a text file.
2. Read this text file and use an SQL transformation to create the tables in the database. Now that the table is available, read the CSV file excluding the header and load the data into the above table via an SQL transformation (insert statement) created by mapping 1.

You can follow this approach for all the CSV files. I haven't tried this solution at my end, but I am sure that the above approach would work.
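Two of the operations discussed above can be sketched without Informatica or Sequel Pro: building a table from a CSV header and loading the remaining rows, and applying `UPDATE ... WHERE primary_key_field = ...` from a second CSV keyed on a non-integer primary key. This is a minimal sketch using Python's standard `csv` and `sqlite3` modules, not the method of either tool. The helpers `quote_ident`, `create_and_load`, and `update_by_key` are hypothetical, every column is assumed to be TEXT, and the table name and rows are dummy data.

```python
import csv
import io
import sqlite3

def quote_ident(name):
    """Quote an SQL identifier so header names cannot break the statement."""
    return '"' + name.replace('"', '""') + '"'

def create_and_load(conn, table, csv_text, key):
    """Create `table` from the CSV header (all TEXT columns) and insert the rows."""
    reader = csv.reader(io.StringIO(csv_text))
    header = [h.strip() for h in next(reader)]
    col_defs = ", ".join(
        f"{quote_ident(h)} TEXT" + (" PRIMARY KEY" if h == key else "")
        for h in header)
    conn.execute(f"CREATE TABLE IF NOT EXISTS {quote_ident(table)} ({col_defs})")
    cols = ", ".join(quote_ident(h) for h in header)
    marks = ", ".join("?" for _ in header)
    # The header line is already consumed, so only data rows are inserted.
    conn.executemany(
        f"INSERT INTO {quote_ident(table)} ({cols}) VALUES ({marks})", reader)
    return header

def update_by_key(conn, table, csv_text, key):
    """Run one UPDATE per CSV row, matched on the `key` column; return rows changed."""
    reader = csv.DictReader(io.StringIO(csv_text))
    others = [c for c in reader.fieldnames if c != key]
    assignments = ", ".join(f"{quote_ident(c)} = ?" for c in others)
    sql = (f"UPDATE {quote_ident(table)} SET {assignments} "
           f"WHERE {quote_ident(key)} = ?")
    updated = 0
    for rec in reader:
        cur = conn.execute(sql, [rec[c] for c in others] + [rec[key]])
        updated += cur.rowcount  # 0 when no row has this key
    return updated

conn = sqlite3.connect(":memory:")
create_and_load(conn, "items", "id_txt,q,qq\nk1,1,a\nk2,2,b\n", key="id_txt")
# Second file: k2 exists and is updated, k9 matches nothing.
updated = update_by_key(conn, "items", "id_txt,q\nk2,20\nk9,90\n", key="id_txt")
```

Because the statements are generated from whatever header the file carries, the same two helpers can be pointed at CSV files with different column layouts.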
